35 Scientific Thinking and Reasoning

Kevin N. Dunbar, Department of Human Development and Quantitative Methodology, University of Maryland, College Park, MD

David Klahr, Department of Psychology, Carnegie Mellon University, Pittsburgh, PA

- Published: 21 November 2012

Scientific thinking refers to both thinking about the content of science and the set of reasoning processes that permeate the field of science: induction, deduction, experimental design, causal reasoning, concept formation, hypothesis testing, and so on. Here we cover both the history of research on scientific thinking and the different approaches that have been used, highlighting common themes that have emerged over the past 50 years of research. Future research will focus on the collaborative aspects of scientific thinking, on effective methods for teaching science, and on the neural underpinnings of the scientific mind.

There is no unitary activity called “scientific discovery”; there are activities of designing experiments, gathering data, inventing and developing observational instruments, formulating and modifying theories, deducing consequences from theories, making predictions from theories, testing theories, inducing regularities and invariants from data, discovering theoretical constructs, and others. — Simon, Langley, & Bradshaw, 1981 , p. 2

What Is Scientific Thinking and Reasoning?

There are two kinds of thinking we call “scientific.” The first, and most obvious, is thinking about the content of science. People are engaged in scientific thinking when they are reasoning about such entities and processes as force, mass, energy, equilibrium, magnetism, atoms, photosynthesis, radiation, geology, or astrophysics (and, of course, cognitive psychology!). The second kind of scientific thinking includes the set of reasoning processes that permeate the field of science: induction, deduction, experimental design, causal reasoning, concept formation, hypothesis testing, and so on. However, these reasoning processes are not unique to scientific thinking: They are the very same processes involved in everyday thinking. As Einstein put it:

The scientific way of forming concepts differs from that which we use in our daily life, not basically, but merely in the more precise definition of concepts and conclusions; more painstaking and systematic choice of experimental material, and greater logical economy. (The Common Language of Science, 1941, reprinted in Einstein, 1950, p. 98)

Nearly 40 years after Einstein's remarkably insightful statement, Francis Crick offered a similar perspective: that great discoveries in science result not from extraordinary mental processes but from quite ordinary ones. The greatness of the discovery lies in the thing discovered.

I think what needs to be emphasized about the discovery of the double helix is that the path to it was, scientifically speaking, fairly commonplace. What was important was not the way it was discovered, but the object discovered—the structure of DNA itself. (Crick, 1988, p. 67; emphasis added)

Under this view, scientific thinking involves the same general-purpose cognitive processes—such as induction, deduction, analogy, problem solving, and causal reasoning—that humans apply in nonscientific domains. These processes are covered in several different chapters of this handbook: Rips, Smith, & Medin, Chapter 11 on induction; Evans, Chapter 8 on deduction; Holyoak, Chapter 13 on analogy; Bassok & Novick, Chapter 21 on problem solving; and Cheng & Buehner, Chapter 12 on causality. One might question the claim that the highly specialized procedures associated with doing science in the “real world” can be understood by investigating the thinking processes used in laboratory studies of the sort described in this volume. However, when the focus is on major scientific breakthroughs, rather than on the more routine, incremental progress in a field, the psychology of problem solving provides a rich source of ideas about how such discoveries might occur. As Simon and his colleagues put it:

It is understandable, if ironic, that ‘normal’ science fits … the description of expert problem solving, while ‘revolutionary’ science fits the description of problem solving by novices. It is understandable because scientific activity, particularly at the revolutionary end of the continuum, is concerned with the discovery of new truths, not with the application of truths that are already well-known … it is basically a journey into unmapped terrain. Consequently, it is mainly characterized, as is novice problem solving, by trial-and-error search. The search may be highly selective—but it reaches its goal only after many halts, turnings, and back-trackings. (Simon, Langley, & Bradshaw, 1981 , p. 5)

The research literature on scientific thinking can be roughly categorized according to the two types of scientific thinking listed in the opening paragraph of this chapter: (1) One category focuses on thinking that directly involves scientific content. Such research ranges from studies of young children reasoning about the sun-moon-earth system (Vosniadou & Brewer, 1992) to college students reasoning about chemical equilibrium (Davenport, Yaron, Klahr, & Koedinger, 2008), to research that investigates collaborative problem solving by world-class researchers in real-world molecular biology labs (Dunbar, 1995). (2) The other category focuses on “general” cognitive processes, but it tends to do so by analyzing people's problem-solving behavior when they are presented with relatively complex situations that involve the integration and coordination of several different types of processes, and that are designed to capture some essential features of “real-world” science in the psychology laboratory (Bruner, Goodnow, & Austin, 1956; Klahr & Dunbar, 1988; Mynatt, Doherty, & Tweney, 1977).

There are a number of overlapping research traditions that have been used to investigate scientific thinking. We will cover both the history of research on scientific thinking and the different approaches that have been used, highlighting common themes that have emerged over the past 50 years of research.

A Brief History of Research on Scientific Thinking

Science is often considered one of the hallmarks of the human species, along with art and literature. Illuminating the thought processes used in science thus reveals key aspects of the human mind. The thought processes underlying scientific thinking have fascinated both scientists and nonscientists because the products of science have transformed our world and because the process of discovery is shrouded in mystery. Scientists talk of the chance discovery, the flash of insight, the years of perspiration, and the voyage of discovery. These images of science have helped make the mental processes underlying the discovery process intriguing to cognitive scientists as they attempt to uncover what really goes on inside the scientific mind and how scientists really think. Furthermore, two possibilities make scientific thinking a topic of enduring interest: that scientists can be taught to think better by avoiding mistakes that have been clearly identified in research on scientific thinking, and that their scientific process could be partially automated.

The cognitive processes underlying scientific discovery and day-to-day scientific thinking have been a topic of intense scrutiny and speculation for almost 400 years (e.g., Bacon, 1620; Galilei, 1638; Klahr, 2000; Tweney, Doherty, & Mynatt, 1981). Understanding the nature of scientific thinking has been a central issue not only for our understanding of science but also for our understanding of what it is to be human. Bacon's Novum Organum in 1620 sketched out some of the key features of the ways that experiments are designed and data interpreted. Over the ensuing 400 years philosophers and scientists vigorously debated the appropriate methods that scientists should use (see Giere, 1993). These debates over the appropriate methods for science typically resulted in the espousal of a particular type of reasoning method, such as induction or deduction. It was not until the Gestalt psychologists began working on the nature of human problem solving, during the 1940s, that experimental psychologists began to investigate the cognitive processes underlying scientific thinking and reasoning.

The Gestalt psychologist Max Wertheimer pioneered the investigation of scientific thinking (of the first type described earlier: thinking about scientific content) in his landmark book Productive Thinking (Wertheimer, 1945). Wertheimer spent a considerable amount of time corresponding with Albert Einstein, attempting to discover how Einstein generated the concept of relativity. Wertheimer argued that Einstein had to overcome the structure of Newtonian physics at each step in his theorizing, and the ways that Einstein actually achieved this restructuring were articulated in terms of Gestalt theories. (For a recent and different account of how Einstein made his discovery, see Galison, 2003.) We will see later how this process of overcoming alternative theories is an obstacle that both scientists and nonscientists need to deal with when evaluating and theorizing about the world.

One of the first investigations of scientific thinking of the second type (i.e., collections of general-purpose processes operating on complex, abstract components of scientific thought) was carried out by Jerome Bruner and his colleagues at Harvard (Bruner et al., 1956). They argued that a key activity engaged in by scientists is to determine whether a particular instance is a member of a category. For example, a scientist might want to discover which substances undergo fission when bombarded by neutrons and which substances do not. Here, scientists have to discover the attributes that make a substance undergo fission. Bruner et al. saw scientific thinking as the testing of hypotheses and the collecting of data with the end goal of determining whether something is a member of a category. They invented a paradigm where people were required to formulate hypotheses and collect data that test their hypotheses. In one type of experiment, the participants were shown a card such as one with two borders and three green triangles. The participants were asked to determine the concept that this card represented by choosing other cards and getting feedback from the experimenter as to whether the chosen card was an example of the concept. In this case the participant may have thought that the concept was green and chosen a card with two green squares and one border. If the underlying concept was green, then the experimenter would say that the card was an example of the concept. In terms of scientific thinking, choosing a new card is akin to conducting an experiment, and the feedback from the experimenter is similar to knowing whether a hypothesis is confirmed or disconfirmed. Using this approach, Bruner et al. identified a number of strategies that people use to formulate and test hypotheses. They found that a key factor determining which hypothesis-testing strategy people use is the amount of memory capacity that the strategy takes up (see also Morrison & Knowlton, Chapter 6; Medin et al., Chapter 11). Another key factor that they discovered was that it was much more difficult for people to discover negative concepts (e.g., not blue) than positive concepts (e.g., blue). Although Bruner et al.'s research is most commonly viewed as work on concepts, they saw their work as uncovering a key component of scientific thinking.
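The card-selection procedure described above can be made concrete with a small simulation. The following Python sketch is only an illustration under stated assumptions: the attribute names, the restriction to single-attribute concepts, and the helper functions `candidate_concepts`, `experimenter_feedback`, and `eliminate` are hypothetical and are not taken from Bruner et al. It shows how feedback on one chosen card can rule out competing hypotheses about the concept.

```python
# Illustrative sketch only: names and the single-attribute restriction are
# assumptions made for this example, not Bruner et al.'s materials.

def candidate_concepts(card):
    """Every single-attribute concept the positive starting card could exemplify."""
    return set(card.items())

def experimenter_feedback(card, concept):
    """The experimenter says whether a chosen card is an example of the hidden concept."""
    attribute, value = concept
    return card[attribute] == value

def eliminate(candidates, card, is_example):
    """Keep only the hypotheses whose prediction for this card matches the feedback."""
    return {(attr, val) for (attr, val) in candidates
            if (card[attr] == val) == is_example}

# Starting card from the text: two borders and three green triangles.
start_card = {"shape": "triangle", "color": "green", "count": 3, "borders": 2}
hidden_concept = ("color", "green")  # known only to the experimenter

# Card chosen by the participant: two green squares and one border.
chosen_card = {"shape": "square", "color": "green", "count": 2, "borders": 1}

candidates = candidate_concepts(start_card)
feedback = experimenter_feedback(chosen_card, hidden_concept)
remaining = eliminate(candidates, chosen_card, feedback)
print(remaining)
```

In this toy setting the chosen card differs from the starting card on every attribute except color, so a single "yes" from the experimenter leaves only the color hypothesis standing.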

A second early line of research on scientific thinking was developed by Peter Wason and his colleagues (Wason, 1968 ). Like Bruner et al., Wason saw a key component of scientific thinking as being the testing of hypotheses. Whereas Bruner et al. focused on the different types of strategies that people use to formulate hypotheses, Wason focused on whether people adopt a strategy of trying to confirm or disconfirm their hypotheses. Using Popper's ( 1959 ) theory that scientists should try and falsify rather than confirm their hypotheses, Wason devised a deceptively simple task in which participants were given three numbers, such as 2-4-6, and were asked to discover the rule underlying the three numbers. Participants were asked to generate other triads of numbers and the experimenter would tell the participant whether the triad was consistent or inconsistent with the rule. They were told that when they were sure they knew what the rule was they should state it. Most participants began the experiment by thinking that the rule was even numbers increasing by 2. They then attempted to confirm their hypothesis by generating a triad like 8-10-12, then 14-16-18. These triads are consistent with the rule and the participants were told yes, that the triads were indeed consistent with the rule. However, when they proposed the rule—even numbers increasing by 2—they were told that the rule was incorrect. The correct rule was numbers of increasing magnitude! From this research, Wason concluded that people try to confirm their hypotheses, whereas normatively speaking, they should try to disconfirm their hypotheses. One implication of this research is that confirmation bias is not just restricted to scientists but is a general human tendency.
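Why confirming triads cannot expose the error in the 2-4-6 task can be shown with a short sketch. The function names below are hypothetical, and the notion of an "informative" test (one where the feedback differs from what the participant's hypothesis predicts) is an illustrative simplification rather than Wason's own analysis.

```python
def true_rule(triad):
    """The experimenter's rule: numbers of increasing magnitude."""
    a, b, c = triad
    return a < b < c


def participant_hypothesis(triad):
    """The typical first guess: even numbers increasing by 2."""
    a, b, c = triad
    return a % 2 == 0 and b - a == 2 and c - b == 2


def informative(triad):
    """A test can reveal an error only if feedback contradicts the hypothesis."""
    return true_rule(triad) != participant_hypothesis(triad)


confirming_tests = [(8, 10, 12), (14, 16, 18)]
disconfirming_tests = [(1, 2, 3), (5, 10, 20), (6, 4, 2)]

for triad in confirming_tests + disconfirming_tests:
    print(triad,
          "hypothesis predicts:", participant_hypothesis(triad),
          "feedback:", true_rule(triad),
          "informative:", informative(triad))
```

Triads chosen to fit the hypothesis always receive a "yes" here, so they never contradict it. Only triads that the hypothesis rejects, such as 1-2-3, can produce the surprising "yes" that shows the hypothesis is too narrow.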

It was not until the 1970s that a general account of scientific reasoning was proposed. Herbert Simon, often in collaboration with Allen Newell, proposed that scientific thinking is a form of problem solving and that problem solving is a search in a problem space. Newell and Simon's theory of problem solving is discussed in many places in this handbook, usually in the context of specific problems (see especially Bassok & Novick, Chapter 21 ). Herbert Simon, however, devoted considerable time to understanding many different scientific discoveries and scientific reasoning processes. The common thread in his research was that scientific thinking and discovery is not a mysterious magical process but a process of problem solving in which clear heuristics are used. Simon's goal was to articulate the heuristics that scientists use in their research at a fine-grained level. By constructing computer programs that simulated the process of several major scientific discoveries, Simon and colleagues were able to articulate the specific computations that scientists could have used in making those discoveries (Langley, Simon, Bradshaw, & Zytkow, 1987 ; see section on “Computational Approaches to Scientific Thinking”). Particularly influential was Simon and Lea's ( 1974 ) work demonstrating that concept formation and induction consist of a search in two problem spaces: a space of instances and a space of rules. This idea has influenced problem-solving accounts of scientific thinking that will be discussed in the next section.

Overall, the work of Bruner, Wason, and Simon laid the foundations for contemporary research on scientific thinking. Early research on scientific thinking is summarized in Tweney, Doherty and Mynatt's 1981 book On Scientific Thinking , where they sketched out many of the themes that have dominated research on scientific thinking over the past few decades. Other more recent books such as Cognitive Models of Science (Giere, 1993 ), Exploring Science (Klahr, 2000 ), Cognitive Basis of Science (Carruthers, Stich, & Siegal, 2002 ), and New Directions in Scientific and Technical Thinking (Gorman, Kincannon, Gooding, & Tweney, 2004 ) provide detailed analyses of different aspects of scientific discovery. Another important collection is Vosniadou's handbook on conceptual change research (Vosniadou, 2008 ). In this chapter, we discuss the main approaches that have been used to investigate scientific thinking.

How does one go about investigating the many different aspects of scientific thinking? One common approach to the study of the scientific mind has been to investigate several key aspects of scientific thinking using abstract tasks designed to mimic some essential characteristics of “real-world” science. Numerous methodologies have been used to analyze the genesis of scientific concepts, theories, hypotheses, and experiments. Researchers have used experiments, verbal protocols, computer programs, and analyses of particular scientific discoveries. A more recent development has been to increase the ecological validity of such research by investigating scientists as they reason “live” (in vivo studies of scientific thinking) in their own laboratories (Dunbar, 1995 , 2002 ). From a “Thinking and Reasoning” standpoint the major aspects of scientific thinking that have been most actively investigated are problem solving, analogical reasoning, hypothesis testing, conceptual change, collaborative reasoning, inductive reasoning, and deductive reasoning.

Scientific Thinking as Problem Solving

One of the primary goals of accounts of scientific thinking has been to provide an overarching framework to understand the scientific mind. One framework that has had a great influence in cognitive science is that scientific thinking and scientific discovery can be conceived as a form of problem solving. As noted in the opening section of this chapter, Simon ( 1977 ; Simon, Langley, & Bradshaw, 1981 ) argued that both scientific thinking in general and problem solving in particular could be thought of as a search in a problem space. A problem space consists of all the possible states of a problem and all the operations that a problem solver can use to get from one state to the next. According to this view, by characterizing the types of representations and procedures that people use to get from one state to another it is possible to understand scientific thinking. Thus, scientific thinking can be characterized as a search in various problem spaces (Simon, 1977 ). Simon investigated a number of scientific discoveries by bringing participants into the laboratory, providing the participants with the data that a scientist had access to, and getting the participants to reason about the data and rediscover a scientific concept. He then analyzed the verbal protocols that participants generated and mapped out the types of problem spaces that the participants search in (e.g., Qin & Simon, 1990 ). Kulkarni and Simon ( 1988 ) used a more historical approach to uncover the problem-solving heuristics that Krebs used in his discovery of the urea cycle. Kulkarni and Simon analyzed Krebs's diaries and proposed a set of problem-solving heuristics that he used in his research. They then built a computer program incorporating the heuristics and biological knowledge that Krebs had before he made his discoveries. Of particular importance are the search heuristics that the program uses, which include experimental proposal heuristics and data interpretation heuristics. 
A key heuristic was an unusualness heuristic that focused on unusual findings, which guided search through a space of theories and a space of experiments.
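The notion of a problem space as a set of states plus operators that move between them can be made concrete with a minimal sketch. The toy number problem, the operator names, and the function `search_problem_space` are illustrative assumptions. The sketch uses plain breadth-first search, not the heuristic search that Simon and colleagues modeled.

```python
from collections import deque


def search_problem_space(start, is_goal, operators, max_states=10_000):
    """Breadth-first search from start to a goal state.

    operators is a list of (name, function) pairs; each function maps a state
    to its successor. Returns the list of operator names applied, or None if
    no goal is found within max_states visited states.
    """
    frontier = deque([(start, [])])
    visited = {start}
    while frontier and len(visited) <= max_states:
        state, path = frontier.popleft()
        if is_goal(state):
            return path
        for name, apply_operator in operators:
            successor = apply_operator(state)
            if successor not in visited:
                visited.add(successor)
                frontier.append((successor, path + [name]))
    return None


# Toy problem (hypothetical): reach 11 from 1 using two operators.
OPERATORS = [("add 3", lambda n: n + 3), ("double", lambda n: n * 2)]
path = search_problem_space(1, lambda n: n == 11, OPERATORS)
print(path)
```

Heuristics such as the unusualness heuristic can be thought of as ways of ordering or pruning the frontier so that promising states are explored first, rather than expanding every state level by level as this sketch does.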

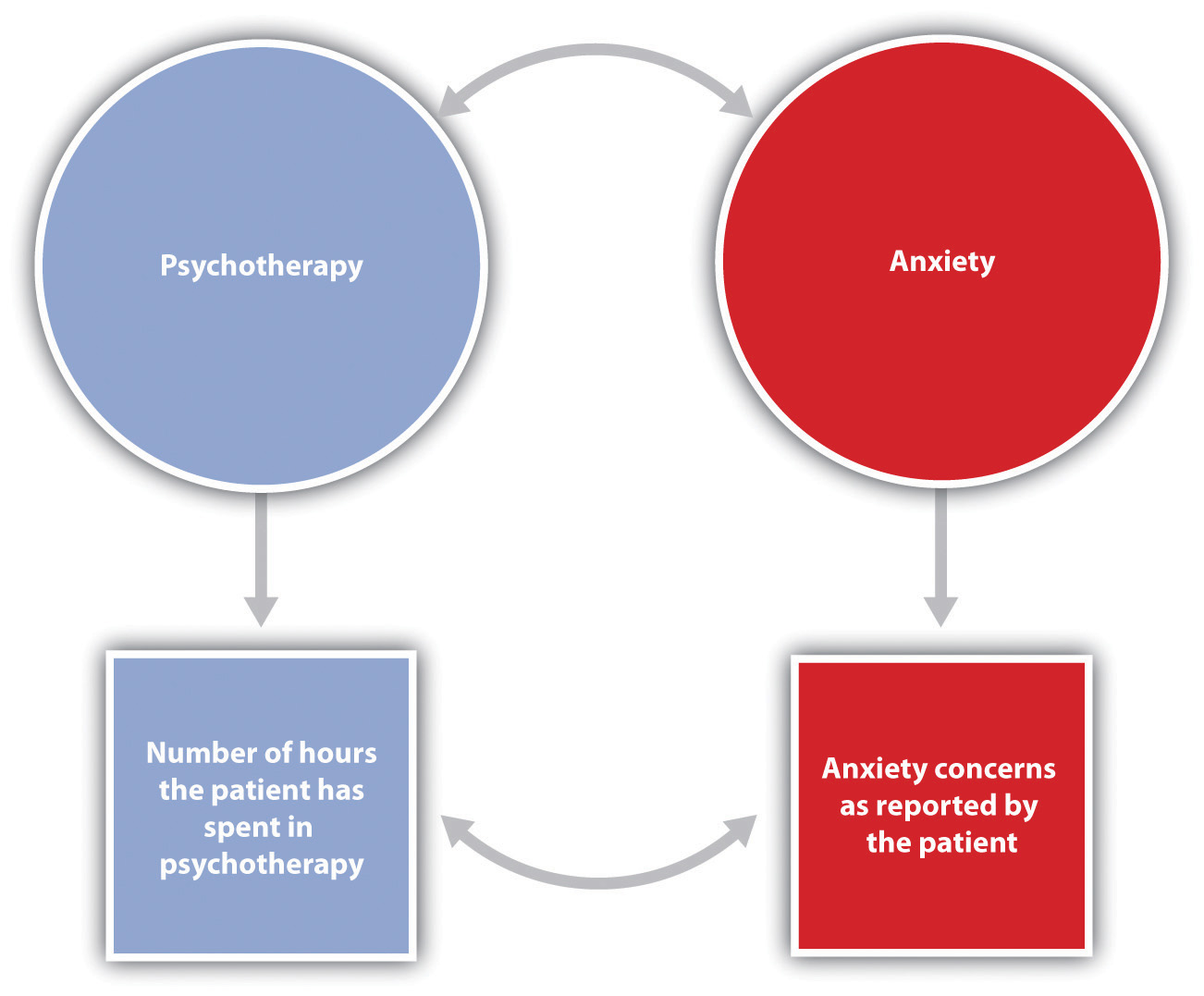

Klahr and Dunbar ( 1988 ) extended the search in a problem space approach and proposed that scientific thinking can be thought of as a search through two related spaces: an hypothesis space and an experiment space. Each problem space that a scientist uses will have its own types of representations and operators used to change the representations. Search in the hypothesis space constrains search in the experiment space. Klahr and Dunbar found that some participants move from the hypothesis space to the experiment space, whereas others move from the experiment space to the hypothesis space. These different types of searches lead to the proposal of different types of hypotheses and experiments. More recent work has extended the dual-space approach to include alternative problem-solving spaces, including those for data, instrumentation, and domain-specific knowledge (Klahr & Simon, 1999 ; Schunn & Klahr, 1995 , 1996 ).

Scientific Thinking as Hypothesis Testing

Many researchers have regarded testing specific hypotheses predicted by theories as one of the key attributes of scientific thinking. Hypothesis testing is the process of evaluating a proposition by collecting evidence regarding its truth. Experimental cognitive research on scientific thinking that specifically examines this issue has tended to fall into two broad classes of investigations. The first class is concerned with the types of reasoning that lead scientists astray, thus blocking scientific ingenuity. A large amount of research has been conducted on the potentially faulty reasoning strategies that both participants in experiments and scientists use, such as considering only one favored hypothesis at a time and how this prevents the scientists from making discoveries. The second class is concerned with uncovering the mental processes underlying the generation of new scientific hypotheses and concepts. This research has tended to focus on the use of analogy and imagery in science, as well as the use of specific types of problem-solving heuristics.

Turning first to investigations of what diminishes scientific creativity, philosophers, historians, and experimental psychologists have devoted a considerable amount of research to “confirmation bias.” This occurs when scientists only consider one hypothesis (typically the favored hypothesis) and ignore other alternative hypotheses or potentially relevant hypotheses. This important phenomenon can distort the design of experiments, formulation of theories, and interpretation of data. Beginning with the work of Wason ( 1968 ) and as discussed earlier, researchers have repeatedly shown that when participants are asked to design an experiment to test a hypothesis they will predominantly design experiments that they think will yield results consistent with the hypothesis. Using the 2-4-6 task mentioned earlier, Klayman and Ha ( 1987 ) showed that in situations where one's hypothesis is likely to be confirmed, seeking confirmation is a normatively incorrect strategy, whereas when the probability of confirming one's hypothesis is low, then attempting to confirm one's hypothesis can be an appropriate strategy. Historical analyses by Tweney ( 1989 ), concerning the way that Faraday made his discoveries, and experiments investigating people testing hypotheses, have revealed that people use a confirm early, disconfirm late strategy: When people initially generate or are given hypotheses, they try and gather evidence that is consistent with the hypothesis. Once enough evidence has been gathered, then people attempt to find the boundaries of their hypothesis and often try to disconfirm their hypotheses.

In an interesting variant on the confirmation bias paradigm, Gorman ( 1989 ) showed that when participants are told that there is the possibility of error in the data that they receive, participants assume that any data that are inconsistent with their favored hypothesis are due to error. Thus, the possibility of error “insulates” hypotheses against disconfirmation. This hypothesis has not been confirmed by other researchers (Penner & Klahr, 1996 ), but it is an intriguing one that warrants further investigation.

Confirmation bias is very difficult to overcome. Even when participants are asked to consider alternate hypotheses, they will often fail to conduct experiments that could potentially disconfirm their hypothesis. Tweney and his colleagues provide an excellent overview of this phenomenon in their classic monograph On Scientific Thinking (1981). The precise reasons for this type of block are still widely debated. Researchers such as Michael Doherty have argued that working memory limitations make it difficult for people to consider more than one hypothesis. Consistent with this view, Dunbar and Sussman ( 1995 ) have shown that when participants are asked to hold irrelevant items in working memory while testing hypotheses, the participants will be unable to switch hypotheses in the face of inconsistent evidence. While working memory limitations are involved in the phenomenon of confirmation bias, even groups of scientists can display confirmation bias. The controversy over cold fusion is one example. Here, large groups of scientists had other hypotheses available to explain their data yet maintained their hypotheses in the face of other more standard alternative hypotheses. Mitroff ( 1974 ) provides some interesting examples of NASA scientists demonstrating confirmation bias, which highlight the roles of commitment and motivation in this process. See also MacPherson and Stanovich ( 2007 ) for specific strategies that can be used to overcome confirmation bias.

Causal Thinking in Science

Much of scientific thinking and scientific theory building pertains to the development of causal models between variables of interest. For example, do vaccines cause illnesses? Do carbon dioxide emissions cause global warming? Does water on a planet indicate that there is life on the planet? Scientists and nonscientists alike are constantly bombarded with statements regarding the causal relationship between such variables. How does one evaluate the status of such claims? What kinds of data are informative? How do scientists and nonscientists deal with data that are inconsistent with their theory?

A central issue in the causal reasoning literature, one that is directly relevant to scientific thinking, is the extent to which scientists and nonscientists alike are governed by the search for causal mechanisms (i.e., how a variable works) versus the search for statistical data (i.e., how often variables co-occur). This dichotomy can be boiled down to the search for qualitative versus quantitative information about the paradigm the scientist is investigating. Researchers from a number of cognitive psychology laboratories have found that people prefer to gather more information about an underlying mechanism than covariation between a cause and an effect (e.g., Ahn, Kalish, Medin, & Gelman, 1995 ). That is, the predominant strategy that students in simulations of scientific thinking use is to gather as much information as possible about how the objects under investigation work, rather than collecting large amounts of quantitative data to determine whether the observations hold across multiple samples. These findings suggest that a central component of scientific thinking may be to formulate explicit mechanistic causal models of scientific events.

One type of situation in which causal reasoning has been observed extensively is when scientists obtain unexpected findings. Both historical and naturalistic research has revealed that reasoning causally about unexpected findings plays a central role in science. Indeed, scientists themselves frequently state that a finding was due to chance or was unexpected. Given that claims of unexpected findings are such a frequent component of scientists' autobiographies and interviews in the media, Dunbar ( 1995 , 1997 , 1999 ; Dunbar & Fugelsang, 2005 ; Fugelsang, Stein, Green, & Dunbar, 2004 ) decided to investigate the ways that scientists deal with unexpected findings. In 1991–1992 Dunbar spent 1 year in three molecular biology laboratories and one immunology laboratory at a prestigious U.S. university. He used the weekly laboratory meeting as a source of data on scientific discovery and scientific reasoning. (He termed this type of study “in vivo” cognition.) When he looked at the types of findings that the scientists made, he found that over 50% of the findings were unexpected and that these scientists had evolved a number of effective strategies for dealing with such findings. One clear strategy was to reason causally about the findings: Scientists attempted to build causal models of their unexpected findings. This causal model building results in the extensive use of collaborative reasoning, analogical reasoning, and problem-solving heuristics (Dunbar, 1997 , 2001 ).

Many of the key unexpected findings that scientists reasoned about in the in vivo studies of scientific thinking were inconsistent with the scientists' preexisting causal models. A laboratory equivalent of the biology labs involved creating a situation in which students obtained unexpected findings that were inconsistent with their preexisting theories. Dunbar and Fugelsang ( 2005 ) examined this issue by creating a scientific causal thinking simulation where experimental outcomes were either expected or unexpected. Dunbar ( 1995 ) has called the study of people reasoning in a cognitive laboratory “in vitro” cognition. These investigators found that students spent considerably more time reasoning about unexpected findings than expected findings. In addition, when assessing the overall degree to which their hypothesis was supported or refuted, participants spent the majority of their time considering unexpected findings. An analysis of participants' verbal protocols indicates that much of this extra time was spent formulating causal models for the unexpected findings. Similarly, scientists spend more time considering unexpected than expected findings, and this time is devoted to building causal models (Dunbar & Fugelsang, 2004 ).

Scientists know that unexpected findings occur often, and they have developed many strategies to take advantage of their unexpected findings. One of the most important places that they anticipate the unexpected is in designing experiments (Baker & Dunbar, 2000 ). They build different causal models of their experiments incorporating many conditions and controls. These multiple conditions and controls allow unknown mechanisms to manifest themselves. Thus, rather than being the victims of the unexpected, they create opportunities for unexpected events to occur, and once these events do occur, they have causal models that allow them to determine exactly where in the causal chain their unexpected finding arose. The results of these in vivo and in vitro studies all point to a more complex and nuanced account of how scientists and nonscientists alike test and evaluate hypotheses about theories.

The Roles of Inductive, Abductive, and Deductive Thinking in Science

One of the most basic characteristics of science is that scientists assume that the universe that we live in follows predictable rules. Scientists reason using a variety of different strategies to make new scientific discoveries. Three frequently used types of reasoning strategies that scientists use are inductive, abductive, and deductive reasoning. In the case of inductive reasoning, a scientist may observe a series of events and try to discover a rule that governs the events. Once a rule is discovered, scientists can extrapolate from the rule to formulate theories of observed and yet-to-be-observed phenomena. One example is the discovery using inductive reasoning that a certain type of bacterium is a cause of many ulcers (Thagard, 1999 ). In a fascinating series of articles, Thagard documented the reasoning processes that Marshall and Warren went through in proposing this novel hypothesis. One key reasoning process was the use of induction by generalization. Marshall and Warren noted that almost all patients with gastric enteritis had a spiral bacterium in their stomachs, and they formed the generalization that this bacterium is the cause of stomach ulcers. There are numerous other examples of induction by generalization in science, such as Tycho Brahe's induction about the motion of planets from his observations, Dalton's use of induction in chemistry, and the discovery of prions as the source of mad cow disease. Many theories of induction have used scientific discovery and reasoning as examples of this important reasoning process.

Another common type of inductive reasoning is to map a feature of one member of a category to another member of a category. This is called categorical induction. This type of induction is a way of projecting a known property of one item onto another item that is from the same category. Thus, knowing that the Rous Sarcoma virus is a retrovirus that uses RNA rather than DNA, a biologist might assume that another virus that is thought to be a retrovirus also uses RNA rather than DNA. While research on this type of induction typically has not been discussed in accounts of scientific thinking, this type of induction is common in science. For an influential contribution to this literature, see Smith, Shafir, and Osherson ( 1993 ), and for reviews of this literature see Heit ( 2000 ) and Medin et al. (Chapter 11 ).

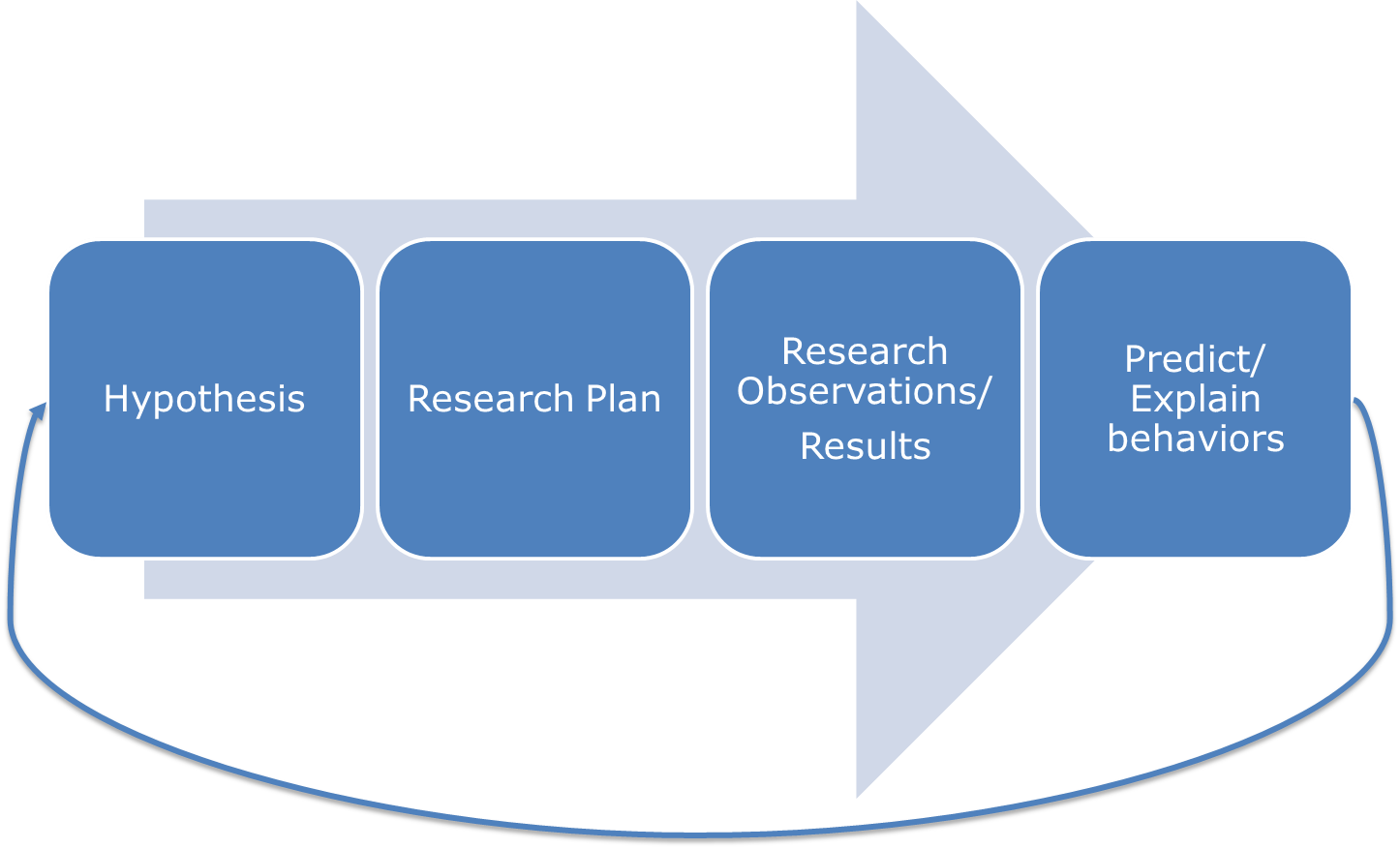

While less commonly mentioned than inductive reasoning, abductive reasoning is an important form of reasoning that scientists use when they are seeking to propose explanations for events such as unexpected findings (see Lombrozo, Chapter 14 ; Magnani et al., 2010 ). In Figure 35.1 , taken from King ( 2011 ), the differences between inductive, abductive, and deductive thinking are highlighted. In the case of abduction, the reasoner attempts to generate explanations of the form “if situation X had occurred, could it have produced the current evidence I am attempting to interpret?” (For an interesting analysis of abductive reasoning, see the brief paper by Klahr & Masnick, 2001 .) Of course, as in classical induction, such reasoning may produce a plausible account that is still not the correct one. However, abduction does involve the generation of new knowledge, and is thus also related to research on creativity.

The different processes underlying inductive, abductive, and deductive reasoning in science. (Figure reproduced from King, 2011.)

Turning now to deductive thinking, many thinking processes that scientists adhere to follow traditional rules of deductive logic. These processes correspond to those conditions in which a hypothesis may lead to, or is deducible to, a conclusion. Though they are not always phrased in syllogistic form, deductive arguments can be phrased as “syllogisms,” or as brief, mathematical statements in which the premises lead to the conclusion. Deductive reasoning is an extremely important aspect of scientific thinking because it underlies a large component of how scientists conduct their research. By looking at many scientific discoveries, we can often see that deductive reasoning is at work. Deductive reasoning statements all contain information or rules that state an assumption about how the world works, as well as a conclusion that would necessarily follow from the rule. Numerous discoveries in physics such as the discovery of dark matter by Vera Rubin are based on deductions. In the dark matter case, Rubin measured galactic rotation curves and based on the differences between the predicted and observed angular motions of galaxies she deduced that the structure of the universe was uneven. This led her to propose that dark matter existed. In contemporary physics the CERN Large Hadron Collider is being used to search for the Higgs Boson. The Higgs Boson is a deductive prediction from contemporary physics. If the Higgs Boson is not found, it may lead to a radical revision of the nature of physics and a new understanding of mass (Hecht, 2011 ).

The Roles of Analogy in Scientific Thinking

One of the most widely mentioned reasoning processes used in science is analogy. Scientists use analogies to form a bridge between what they already know and what they are trying to explain, understand, or discover. In fact, many scientists have claimed that the making of certain analogies was instrumental in their making a scientific discovery, and almost all scientific autobiographies and biographies feature one particular analogy that is discussed in depth. Coupled with the fact that there has been an enormous research program on analogical thinking and reasoning (see Holyoak, Chapter 13 ), we now have a number of models and theories of analogical reasoning that suggest how analogy can play a role in scientific discovery (see Gentner, Holyoak, & Kokinov, 2001 ). By analyzing several major discoveries in the history of science, Thagard and Croft ( 1999 ), Nersessian ( 1999 , 2008 ), and Gentner and Jeziorski ( 1993 ) have all shown that analogical reasoning is a key aspect of scientific discovery.

Traditional accounts of analogy distinguish between two components of analogical reasoning: the target and the source (Holyoak, Chapter 13 ; Gentner 2010 ). The target is the concept or problem that a scientist is attempting to explain or solve. The source is another piece of knowledge that the scientist uses to understand the target or to explain the target to others. What the scientist does when he or she makes an analogy is to map features of the source onto features of the target. By mapping the features of the source onto the target, new features of the target may be discovered, or the features of the target may be rearranged so that a new concept is invented and a scientific discovery is made. For example, a common analogy that is used with computers is to describe a harmful piece of software as a computer virus. Once a piece of software is called a virus, people can map features of biological viruses onto it, such as being small, spreading easily, self-replicating using a host, and causing damage. People map not only individual features of the source onto the target but also systems of relations. For example, if a computer virus is similar to a biological virus, then an immune system can be created on computers that can protect computers from future variants of a virus. One of the reasons that scientific analogy is so powerful is that it can generate new knowledge, such as the creation of a computational immune system having many of the features of a real biological immune system. This analogy also leads to predictions that there will be newer computer viruses that are the computational equivalent of retroviruses, lacking DNA, or standard instructions, that will elude the computational immune system.

The process of making an analogy involves a number of key steps: retrieval of a source from memory, aligning the features of the source with those of the target, mapping features of the source onto those of the target, and possibly making new inferences about the target. Scientific discoveries are made when the source highlights a hitherto unknown feature of the target or restructures the target into a new set of relations. Interestingly, research on analogy has shown that participants do not easily use remote analogies (see Gentner et al., 1997 ; Holyoak & Thagard 1995 ). Participants in experiments tend to focus on the sharing of a superficial feature between the source and the target, rather than the relations among features. In his in vivo studies of science, Dunbar ( 1995 , 2001 , 2002 ) investigated the ways that scientists use analogies while they are conducting their research and found that scientists use both relational and superficial features when they make analogies. Whether they use superficial or relational features depends on their goals. If their goal is to fix a problem in an experiment, their analogies are based upon superficial features. However, if their goal is to formulate hypotheses, they focus on analogies based upon sets of relations. One important difference between scientists and participants in experiments is that the scientists have deep relational knowledge of the processes that they are investigating and can hence use this relational knowledge to make analogies (see Holyoak, Chapter 13 for a thorough review of analogical reasoning).

Are scientific analogies always useful? Sometimes analogies can lead scientists and students astray. For example, Evelyn Fox Keller ( 1985 ) shows how an analogy between the pulsing of a lighthouse and the activity of the slime mold Dictyostelium led researchers astray for a number of years. Likewise, the analogy between the solar system (the source) and the structure of the atom (the target) has been shown to be potentially misleading to students taking more advanced courses in physics or chemistry. The solar system analogy has a number of misalignments to the structure of the atom, such as electrons being repelled from each other rather than attracted; moreover, electrons do not have individual orbits like planets but have orbital clouds of electron density. Furthermore, students have serious misconceptions about the nature of the solar system, which can compound their misunderstanding of the nature of the atom (Fischler & Lichtfeld, 1992 ). While analogy is a powerful tool in science, like all forms of induction it can lead to incorrect conclusions.

Conceptual Change in Science

Scientific knowledge continually accumulates as scientists gather evidence about the natural world. Over extended time, this knowledge accumulation leads to major revisions, extensions, and new organizational forms for expressing what is known about nature. Indeed, these changes are so substantial that philosophers of science speak of “revolutions” in a variety of scientific domains (Kuhn, 1962 ). The psychological literature that explores the idea of revolutionary conceptual change can be roughly divided into (a) investigations of how scientists actually make discoveries and integrate those discoveries into existing scientific contexts, and (b) investigations of nonscientists ranging from infants, to children, to students in science classes. In this section we summarize the adult studies of conceptual change, and in the next section we look at its developmental aspects.

Scientific concepts, like all concepts, can be characterized as containing a variety of “knowledge elements”: representations of words, thoughts, actions, objects, and processes. At certain points in the history of science, the accumulated evidence has demanded major shifts in the way these collections of knowledge elements are organized. This “radical conceptual change” process (see Keil, 1999 ; Nersessian 1998 , 2002 ; Thagard, 1992 ; Vosniadou 1998, for reviews) requires the formation of a new conceptual system that organizes knowledge in new ways, adds new knowledge, and results in a very different conceptual structure. For more recent research on conceptual change, The International Handbook of Research on Conceptual Change (Vosniadou, 2008 ) provides a detailed compendium of theories and controversies within the field.

While conceptual change in science is usually characterized by large-scale changes in concepts that occur over extensive periods of time, it has been possible to observe conceptual change using in vivo methodologies. Dunbar ( 1995 ) reported a major conceptual shift that occurred in immunologists, where they obtained a series of unexpected findings that forced the scientists to propose a new concept in immunology that in turn forced the change in other concepts. The drive behind this conceptual change was the discovery of a series of different unexpected findings or anomalies that required the scientists to both revise and reorganize their conceptual knowledge. Interestingly, this conceptual change was achieved by a group of scientists reasoning collaboratively, rather than by a scientist working alone. Different scientists tend to work on different aspects of concepts, and also different concepts, that when put together lead to a rapid change in entire conceptual structures.

Overall, accounts of conceptual change in individuals indicate that it is indeed similar to that of conceptual change in entire scientific fields. Individuals need to be confronted with anomalies that their preexisting theories cannot explain before entire conceptual structures are overthrown. However, replacement conceptual structures have to be generated before the old conceptual structure can be discarded. Sometimes, people do not overthrow their original conceptual theories and through their lives maintain their original views of many fundamental scientific concepts. Whether people actively possess naive theories, or whether they appear to have a naive theory because of the demand characteristics of the testing context, is a lively source of debate within the science education community (see Gupta, Hammer, & Redish, 2010 ).

Scientific Thinking in Children

Well before their first birthday, children appear to know several fundamental facts about the physical world. For example, studies with infants show that they behave as if they understand that solid objects endure over time (e.g., they don't just disappear and reappear), that they cannot move through each other, and that they move as a result of collisions with other solid objects or the force of gravity (Baillargeon, 2004; Carey, 1985; Cohen & Cashon, 2006; Duschl, Schweingruber, & Shouse, 2007; Gelman & Baillargeon, 1983; Gelman & Kalish, 2006; Mandler, 2004; Metz, 1995; Munakata, Casey, & Diamond, 2004). Even 6-month-olds are able to predict the future location of a moving object that they are attempting to grasp (Von Hofsten, 1980; Von Hofsten, Feng, & Spelke, 2000). In addition, they appear to be able to make nontrivial inferences about causes and their effects (Gopnik et al., 2004).

The similarities between children's thinking and scientists' thinking have an inherent allure and an internal contradiction. The allure resides in the enthusiastic wonder and openness with which both children and scientists approach the world around them. The contradiction comes from the fact that different investigators of children's thinking have reached diametrically opposing conclusions about just how “scientific” children's thinking really is. Some claim support for the “child as a scientist” position (Brewer & Samarapungavan, 1991; Gelman & Wellman, 1991; Gopnik, Meltzoff, & Kuhl, 1999; Karmiloff-Smith, 1988; Sodian, Zaitchik, & Carey, 1991; Samarapungavan, 1992), while others offer serious challenges to the view (Fay & Klahr, 1996; Kern, Mirels, & Hinshaw, 1983; Kuhn, Amsel, & O'Laughlin, 1988; Schauble & Glaser, 1990; Siegler & Liebert, 1975). Such fundamentally incommensurate conclusions suggest that this very field, children's scientific thinking, is ripe for a conceptual revolution!

A recent comprehensive review (Duschl, Schweingruber, & Shouse, 2007 ) of what children bring to their science classes offers the following concise summary of the extensive developmental and educational research literature on children's scientific thinking:

Children entering school already have substantial knowledge of the natural world, much of which is implicit.

What children are capable of at a particular age is the result of a complex interplay among maturation, experience, and instruction. What is developmentally appropriate is not a simple function of age or grade, but rather is largely contingent on children's prior opportunities to learn.

Students' knowledge and experience play a critical role in their science learning, influencing four aspects of science understanding: (a) knowing, using, and interpreting scientific explanations of the natural world; (b) generating and evaluating scientific evidence and explanations; (c) understanding how scientific knowledge is developed in the scientific community; and (d) participating in scientific practices and discourse.

Students learn science by actively engaging in the practices of science.

In the previous section of this article we discussed conceptual change with respect to scientific fields and undergraduate science students. However, the idea that children undergo radical conceptual change in which old “theories” need to be overthrown and reorganized has been a central topic in understanding changes in scientific thinking both in children and across the life span. This radical conceptual change is thought to be necessary for acquiring many new concepts in physics and is regarded as the major source of difficulty for students. The factors at the root of this conceptual shift have been difficult to determine, although there have been a number of studies in cognitive development (Carey, 1985; Chi, 1992; Chi & Roscoe, 2002), in the history of science (Thagard, 1992), and in physics education (Clement, 1982; Mestre, 1991) that give detailed accounts of the changes in knowledge representation that occur while people switch from one way of representing scientific knowledge to another.

One area where students show great difficulty in understanding scientific concepts is physics. Analyses of students' changing conceptions, using interviews, verbal protocols, and behavioral outcome measures, indicate that large-scale changes in students' concepts occur in physics education (see McDermott & Redish, 1999 , for a review of this literature). Following Kuhn ( 1962 ), many researchers, but not all, have noted that students' changing conceptions resemble the sequences of conceptual changes in physics that have occurred in the history of science. These notions of radical paradigm shifts and ensuing incompatibility with past knowledge-states have called attention to interesting parallels between the development of particular scientific concepts in children and in the history of physics. Investigations of nonphysicists' understanding of motion indicate that students have extensive misunderstandings of motion. Some researchers have interpreted these findings as an indication that many people hold erroneous beliefs about motion similar to a medieval “impetus” theory (McCloskey, Caramazza, & Green, 1980 ). Furthermore, students appear to maintain “impetus” notions even after one or two courses in physics. In fact, some authors have noted that students who have taken one or two courses in physics can perform worse on physics problems than naive students (Mestre, 1991 ). Thus, it is only after extensive learning that we see a conceptual shift from impetus theories of motion to Newtonian scientific theories.

How one's conceptual representation shifts from “naive” to Newtonian is a matter of contention, as some have argued that the shift involves a radical conceptual change, whereas others have argued that the conceptual change is not really complete. For example, Kozhevnikov and Hegarty (2001) argue that many of the naive impetus notions of motion are maintained at the expense of Newtonian principles even with extensive training in physics. However, they argue that such impetus principles are maintained at an implicit level. Thus, although students can give the correct Newtonian answer to problems, their response times indicate that they are also using impetus theories when they respond. An alternative view of conceptual change focuses on whether there are real conceptual changes at all. Gupta, Hammer, and Redish (2010) and diSessa (2004) have conducted detailed investigations of changes in physics students' accounts of phenomena covered in elementary physics courses. They have found that rather than possessing a naive theory that is replaced by the standard theory, many introductory physics students have no stable physical theory but instead construct their explanations from elementary pieces of knowledge of the physical world.

Computational Approaches to Scientific Thinking

Computational approaches have provided a more complete account of the scientific mind. Computational models provide specific detailed accounts of the cognitive processes underlying scientific thinking. Early computational work consisted of taking a scientific discovery and building computational models of the reasoning processes involved in the discovery. Langley, Simon, Bradshaw, and Zytkow ( 1987 ) built a series of programs that simulated discoveries such as those of Copernicus, Bacon, and Stahl. These programs had various inductive reasoning algorithms built into them, and when given the data that the scientists used, they were able to propose the same rules. Computational models make it possible to propose detailed models of the cognitive subcomponents of scientific thinking that specify exactly how scientific theories are generated, tested, and amended (see Darden, 1997 , and Shrager & Langley, 1990 , for accounts of this branch of research). More recently, the incorporation of scientific knowledge into computer programs has resulted in a shift in emphasis from using programs to simulate discoveries to building programs that are used to help scientists make discoveries. A number of these computer programs have made novel discoveries. For example, Valdes-Perez ( 1994 ) has built systems for discoveries in chemistry, and Fajtlowicz has done this in mathematics (Erdos, Fajtlowicz, & Staton, 1991 ).
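To make the idea of a data-driven inductive algorithm concrete, the following is a minimal sketch and not a reconstruction of the programs of Langley et al. (1987). It assumes a much simpler heuristic than theirs: given two columns of numerical observations, it searches small integer exponents for a product that stays nearly constant. The function names, the exponent range, and the 1% tolerance are illustrative assumptions, and the planetary distances and periods are used only as a familiar data set.

```python
from itertools import product
from statistics import mean, pstdev

# Mean orbital distance (astronomical units) and period (years) for five planets.
DISTANCE = [0.387, 0.723, 1.000, 1.524, 5.203]
PERIOD = [0.241, 0.615, 1.000, 1.881, 11.862]


def relative_spread(values):
    """Population standard deviation divided by the mean (0 means constant)."""
    return pstdev(values) / abs(mean(values))


def find_invariant(xs, ys, max_exp=3, tol=0.01):
    """Search small integer exponents (a, b) for which x**a * y**b is nearly constant.

    Hypothetical helper for illustration; returns the simplest match or None.
    """
    matches = []
    # a > 0 fixes the sign convention, so (3, -2) and (-3, 2) are not both tested.
    for a, b in product(range(1, max_exp + 1), range(-max_exp, max_exp + 1)):
        terms = [x**a * y**b for x, y in zip(xs, ys)]
        if relative_spread(terms) < tol:
            matches.append((a, b))
    # Prefer the candidate law with the smallest exponents.
    return min(matches, key=lambda ab: abs(ab[0]) + abs(ab[1]), default=None)


law = find_invariant(DISTANCE, PERIOD)
print(law)
```

With these values the search returns the exponents (3, -2): distance cubed divided by period squared is approximately constant, which is Kepler's third law. The sketch illustrates only the general point made above, that a simple inductive procedure applied to the data a scientist had available can arrive at the same rule.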

These advances in the fields of computer discovery have led to new fields, conferences, journals, and even departments that specialize in the development of programs devised to search large databases in the hope of making new scientific discoveries (Langley, 2000 , 2002 ). This process is commonly known as “data mining.” This approach has only proved viable relatively recently, due to advances in computer technology. Biswal et al. ( 2010 ), Mitchell ( 2009 ), and Yang ( 2009 ) provide recent reviews of data mining in different scientific fields. Data mining is at the core of drug discovery, our understanding of the human genome, and our understanding of the universe for a number of reasons. First, vast databases concerning drug actions, biological processes, the genome, the proteome, and the universe itself now exist. Second, the development of high throughput data-mining algorithms makes it possible to search for new drug targets, novel biological mechanisms, and new astronomical phenomena in relatively short periods of time. Research programs that took decades, such as the development of penicillin, can now be done in days (Yang, 2009 ).

Another recent shift in the use of computers in scientific discovery has been to have both computers and people make discoveries together, rather than expecting computers to make an entire scientific discovery. Now, instead of using computers to mimic the entire scientific discovery process as used by humans, computers can use powerful algorithms that search for patterns in large databases and provide the patterns to humans, who can then use the output of these computers to make discoveries, ranging from the human genome to the structure of the universe. However, there are some robots, such as ADAM, developed by King (2011), that can actually perform the entire scientific process, from the generation of hypotheses, to the conduct of experiments and the interpretation of results, with little human intervention. The ongoing development of scientific robots by some scientists (King et al., 2009) thus continues the tradition started by Herbert Simon in the 1960s. However, many of the controversies as to whether the robot is a “real scientist” or not continue to the present (Evans & Rzhetsky, 2010; Gianfelici, 2010; Haufe, Elliott, Burian, & O'Malley, 2010; O'Malley, 2011).

Scientific Thinking and Science Education